On today’s government networks, the real action is happening at the edge. We are seeing significant growth in edge-based processing power for government, with onboard analytics and dedicated artificial intelligence.

Just as the description implies, these dedicated systems are located near the network edge, often in modular containers which serve as low-level networked datacenters. Some are about the size of a minivan, and some are small enough to be mounted on utility poles.

That’s a striking development, especially at the federal level, because agencies recently finished a multiyear effort to reduce, rather than increase, their total number of datacenters and standalone servers. The federal Data Center Optimization Initiative (DCOI) served as the catalyst for that era, with the federal government eliminating over 3,000 nontiered datacenters and over 200 mid-level tiered facilities from 2016 to late 2019.

However, now we have new data facilities popping up again. Here’s the difference: These new systems are dedicated to very specific missions. The full range of what those edge missions are, and how government is reacting, can be found in this newly published IDC document: Edge Computing, 5G, and AI — The Perfect Storm for Government Systems. The report also includes some use cases and survey data.

In short, governments are finding that keeping data where it’s collected, and processing it and making decisions right there, sometimes requires datacenter-class processing capabilities at the edge, along with advanced analytics to help uncover details, trends, and correlations. The main reason to do the processing at the edge is so that the decisions made there can immediately trigger other systems, such as border alerts, traffic light adjustments, heating or cooling changes, and a range of other options.

The support for such efforts is substantial. The current President’s Management Agenda focuses on modernizing IT to increase productivity and security. It also sets progress goals and checkpoints for IT systems, including a push to optimize datacenters. Sometimes optimization means putting the processing closer to where it’s needed. To accomplish this, a new set of solutions that lives at the edge of government networks is being created.

The Evolving Edge AI Stack

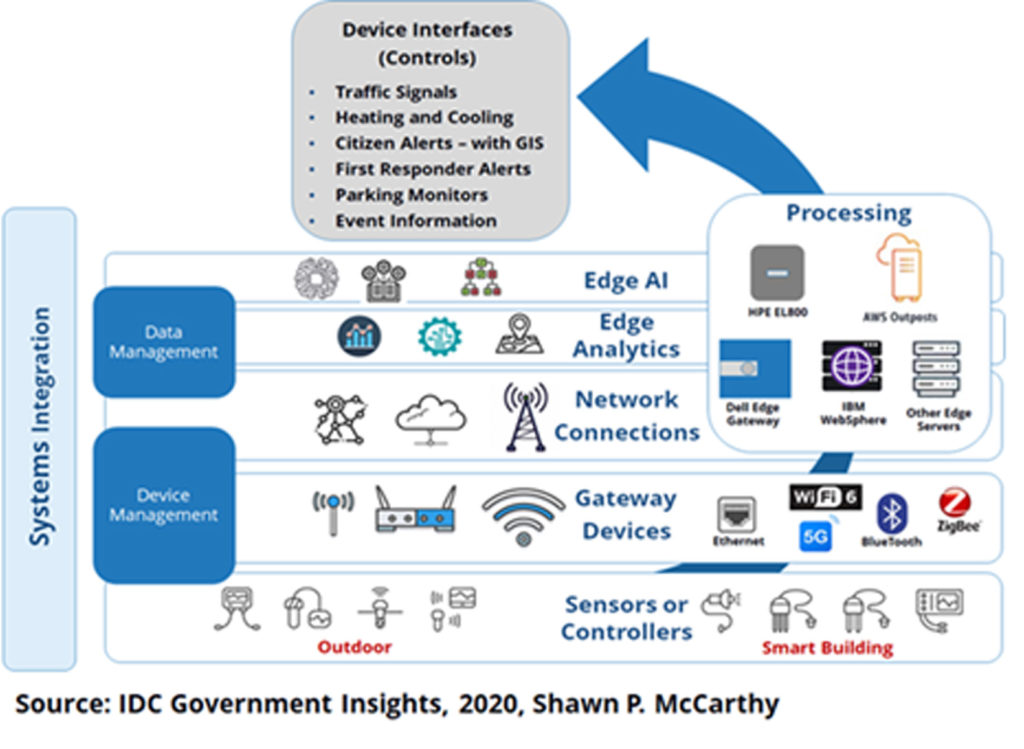

The graphic above shows a highly stylized version of an “AI at the edge – technology stack.” Let’s start at the bottom and work up. At the base are the sensors used to gather government data (whether outdoors or within government buildings). Everything else in the stack uses and builds upon data collected and held at the edge.

Level 2 (from the bottom) shows how sensor data is fed into the network by various gateway devices. The devices themselves can be short or long range in the way they connect to the gateways. Connections can be as basic as a single device with a hardwired Ethernet cable or they can link to multiple fully wireless gateways such as Wi-Fi, cell connections (4G or 5G), Bluetooth links, or ZigBee.

The gateways, in turn, feed data into larger networks (third level from the bottom), which can range from dedicated government networks, to leased IP transit lines, to cloud services (often simply infrastructure as a service), to cell systems. Device management solutions, in turn, reach across gateways and network connections to handle device monitoring, power consumption readings and settings, configuration management, anomaly detection, and more.

Data is carried over the network to where the processing is done. In the case of edge devices, this processing may be located just a few meters or several hundred meters away. Some cities have small edge processing capabilities located every few blocks. (Often these are small enough to be located on utility poles or in sidewalk-level containers that look similar to traffic control boxes.) In other cases, the edge servers are larger and located close to cell towers or other network points of presence. These larger installations can be dedicated solely to government data or they can be part of larger commercial edge processing services with virtualized computing instances that are sold to multiple industries.

The processing itself is handled by these edge-based servers. In some cases, these are small, one-off, dedicated devices. In other cases, where data collections and edge facilities are large enough, there can be racks of virtualized servers that function like (and are remotely managed like) traditional datacenters. Various applications can be installed along with this computing power, including analytics, which can be set up to continuously process incoming data and to highlight any changes, anomalies, security threats, or whatever else a government deems valuable and actionable.
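To make the idea of continuously processing incoming data concrete, here is a minimal sketch of one way an edge analytics routine might flag anomalies in a stream of sensor readings. It uses a simple rolling z-score, which is an illustrative technique chosen here and not one named in the IDC report. The class name, window size, threshold, and sample temperature readings are all hypothetical.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flags readings that deviate sharply from a rolling window of recent values.

    Hypothetical illustration: window_size and threshold are assumed values,
    not settings taken from any real government deployment.
    """

    def __init__(self, window_size=20, threshold=3.0, min_samples=5):
        self.window = deque(maxlen=window_size)  # recent "normal" readings
        self.threshold = threshold               # z-score cutoff
        self.min_samples = min_samples           # readings needed before judging

    def observe(self, value):
        """Return True if value is anomalous relative to the current window."""
        is_anomaly = False
        if len(self.window) >= self.min_samples:
            mu = mean(self.window)
            sigma = stdev(self.window)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Only normal readings update the baseline, so a single spike
        # does not distort the statistics used for later readings.
        if not is_anomaly:
            self.window.append(value)
        return is_anomaly


# Hypothetical temperature readings (degrees C) from one sensor.
readings = [20.0, 20.5, 19.8, 20.2, 20.1, 19.9, 20.3, 55.0, 20.0]
detector = RollingAnomalyDetector()
flags = [detector.observe(r) for r in readings]
anomalous = [r for r, flagged in zip(readings, flags) if flagged]
print(anomalous)
```

A production system would likely use more robust statistics or a trained model, but the shape is the same: data arrives, a small local computation runs, and only the noteworthy events are surfaced.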

Small Footprint AI Brings True Power to Edge Processing

If the advantage of edge computing is its proximity to where decisions need to be made, then AI is the foundation of those decisions. AI can be used to make assessments based on quick data analysis. Some governments use cloud-based AI solutions, pointed at the data where it lives in their edge systems. But others install the AI applications right where the data is collected to gain speed and agility.

Being able to quickly analyze data, identify trigger points, and send immediate instructions, via APIs, to other government systems is paramount for edge computing success. The type of AI used at an edge location does not need to be complex. If the only thing a system needs to do is trigger signal changes or send alerts, it can be fairly bare bones and small-footprint.

Machine learning or human assistance can train each installed AI to make specific decisions based on the analyzed data. From there, various external devices can be set up to interface with the edge system. Depending on what the AI system decides, alerts can be sent to alter settings on traffic signals or parking monitors, environmental systems, citizen or police alert systems, and so forth.
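As a rough illustration of how bare-bones such decision logic can be, the sketch below maps analyzed readings to named actions through a small rule table. Everything in it is an assumption made for illustration: the field names (`vehicles_per_minute`, `room_temp_c`), the thresholds, and the action identifiers (`extend_green_phase`, `increase_cooling`) are hypothetical and do not correspond to any real government API. In a real deployment, each returned action would be sent to the relevant external system through that system's own API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class TriggerRule:
    """One trigger point: a condition on a reading and the action it implies."""
    name: str
    condition: Callable[[Dict[str, float]], bool]
    action: str  # hypothetical identifier for a downstream API call


def evaluate(rules: List[TriggerRule], reading: Dict[str, float]) -> List[str]:
    """Return the actions whose conditions are met by this reading."""
    return [rule.action for rule in rules if rule.condition(reading)]


# Hypothetical rules; thresholds are illustrative only.
RULES = [
    TriggerRule(
        name="congestion",
        condition=lambda r: r.get("vehicles_per_minute", 0) > 40,
        action="extend_green_phase",
    ),
    TriggerRule(
        name="overheating",
        condition=lambda r: r.get("room_temp_c", 0) > 27,
        action="increase_cooling",
    ),
]

reading = {"vehicles_per_minute": 52, "room_temp_c": 22.5}
actions = evaluate(RULES, reading)
print(actions)
```

Keeping the rules as data rather than hard-coded branches means thresholds can be retuned, whether by a person or by a learning process, without changing the code that dispatches the actions.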

As shown on the left side of the stack graphic, all of these levels are tied together by systems integrators. Every installation is different. Every government has its preferred vendors, brands, custom software patches, routines, and preferred programming languages. It takes a skilled IT service provider to tie everything together and make these complex systems work in concert. But, as with so many modern IT systems, edge computing will become increasingly standardized and commoditized in the years ahead. Eventually, many systems will be packaged as turnkey solutions, with preinstalled software and sets of subscription services capable of handling many of the things that still take extensive customization today.

But customized integration will always be part of the mix, especially as new solutions are added.

The blue arrow in the graphic points up through the whole stack, through the processing systems, and toward the last piece of the edge computing puzzle. Based on what the AI system sees and the actions it recommends, signals can be sent to other systems on the government network via multiple APIs. The timing of traffic signals can be changed, temperature controls in buildings can be adjusted, alerts can be sent, and more. The reason the system is built at the edge, and for speed, is so these signals can be sent faster and more accurately.

For all of these reasons, edge-based processing, with rapidly evolving stacks of solutions and skilled systems integrators, is where the IoT action is for government. We’ve developed the webinar, Edge Computing, 5G and AI: Government’s Exponential Perfect Storm, to discuss this topic in more depth. To learn more, be sure to join us Tuesday, May 19th.